Australian workplaces are beginning to trial AI agents—a new wave of workplace automation tools designed to do more than generate text. Unlike standard chatbots, these systems can take actions across business software, such as drafting emails, updating spreadsheets, scheduling meetings, or processing basic customer service requests.

AI agents are being pitched as a way to cut repetitive tasks and lift productivity, particularly in admin-heavy roles. Employers and tech vendors say the tools can reduce time spent on routine coordination and reporting, freeing staff to focus on higher-value work.

In practice, an AI agent can operate like a digital assistant connected to workplace apps. It may pull information from calendars or customer databases, create summaries from meeting notes, and trigger simple workflows—often with a human approving the final step. That “connected access” is also where many of the risks sit.

Cybersecurity and privacy experts warn that giving automated systems broader access to business accounts increases the impact of mistakes and breaches. If an agent sends the wrong email, shares sensitive data, or acts on incorrect information, organisations still carry responsibility—especially when personal information is involved.

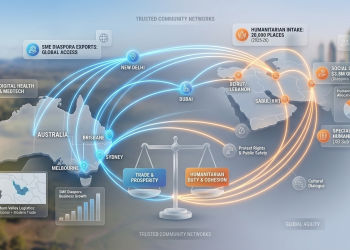

For Australia’s multicultural workforce, the change also has a skills dimension. Employers may need to invest in digital skills training so workers across language backgrounds and job types can use new tools safely and confidently, rather than being locked out of opportunities as workflows shift.

The wider shift is clear: AI agents signal a move from “AI that advises” to “AI that acts” in everyday work. As adoption grows, organisations will face pressure to set clear rules on accountability, data access, and fair workplace change, so that workplace automation improves services and productivity without widening inequality or undermining trust.